
Tips For Requesters On Mechanical Turk

Sunday, July 23, 2023

Tuesday, March 16, 2021

Academic Survey Publishing

Successfully publishing an academic survey on Mturk can be an exhilarating experience. Watching participants submit and monitoring the progress of your study is truly exciting. When it comes time to examine the data, though, some researchers are left disenchanted with the platform because of poor-quality responses, or because participant comments reveal how much there is to learn about Mturk.

Three keys to being a quality requester on Mturk

1. Your reputation matters on Mturk. On the participant side, Amazon has built in an approval percentage: a generic pop-up on the worker side lets participants know how likely you are to approve or reject their work. Many independent worker review sites are also linked to Mturk, giving participants a forum to report requesters who behave unethically. Only reject a participant's submission if it is outright theft. If it is just poor-quality data, add the participant to a disqualifier qualification so they cannot participate in any of your future studies.

2. Use qualifications wisely, or even better, screen participants before allowing access. You could use Amazon's premium qualifications, but these are just a way for Amazon to drain your budget. Instead, pay a small amount and screen participants yourself: have them self-report their demographics, and when your small initial screening study is completed, send eligible participants an invitation to your main study via a separate HIT.

We have found that prescreening reduces unusable responses to less than 1%. If you rely on qualifications alone, always require an approval percentage of at least 98-99%. Mturk is a workplace, not a classroom. The participants who do not read instructions, answer randomly, or are inattentive are the ones you will find below a 98% approval percentage.

3. Always be civil in any email correspondence. We know that survey design takes long hours of hard work, and it is easy to take critical emails personally. You may receive emails from participants that seem to attack you or your work. Sometimes no response is the best response, but if a response is required, always remain professional.
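The screen-then-invite workflow from tip 2 can be sketched in Python. This is a minimal sketch under stated assumptions, not our production code: it assumes the screening HIT results were loaded as rows with hypothetical `WorkerId`, `age` and `employment` fields, and that `client` is a boto3 MTurk client (`boto3.client("mturk")`). Its `notify_workers` call accepts at most 100 worker IDs per request, which is why the IDs are sent in batches. The eligibility rule shown is only an example.

```python
from typing import Iterable, Iterator


def eligible_workers(rows: Iterable[dict], min_age: int, employment: str) -> list:
    """Worker IDs whose self-reported screening answers match the main study's criteria."""
    return [
        row["WorkerId"]
        for row in rows
        if int(row["age"]) >= min_age and row["employment"] == employment
    ]


def batches(ids: list, size: int = 100) -> Iterator[list]:
    """Split worker IDs into chunks; NotifyWorkers takes at most 100 IDs per call."""
    for start in range(0, len(ids), size):
        yield ids[start:start + size]


def invite(client, worker_ids: list, subject: str, message: str) -> list:
    """Email every eligible worker and collect any per-worker failure statuses."""
    failures = []
    for chunk in batches(worker_ids):
        response = client.notify_workers(
            Subject=subject, MessageText=message, WorkerIds=chunk
        )
        failures.extend(response.get("NotifyWorkersFailureStatuses", []))
    return failures
```

The message text would normally tell invited workers how to find the separate main-study HIT. Keeping the failure statuses matters for longitudinal work, since they reveal invitees who can no longer be reached.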

As always, if you do not want to deal with the complexities of self-publishing on Mturk, contact us at design@mturkdata.com

Thursday, August 22, 2019

New attrition considerations when publishing longitudinal studies on Mturk

New attrition considerations when publishing longitudinal academic studies on Mturk: Amazon (@amazonmturk) is weeding out "nefarious participants" on Mturk, and it is causing larger attrition rates on academic studies. We lost 6% of our pool over three weeks to removed participants. We lost a little less than 1% of our participants from a screening qualification with a two-day separation between qualification and study. These numbers used to be negligible, maybe three or four out of 1000, but they are now increasing at staggering rates.

For a study we published this week, the researcher lost around $40 in financial cost, but the cost in lost potential responses is higher. These 20 participants performed well on the first study and were invited back to the second part of the study. So right when we publish, we are down 20 participants from our data set of 330, which reduces our return rate significantly: right off the bat we are looking at 85% of 310 instead of 330. From our point of view, there is no reason for a 99% approval participant from the USA with over 1000 HITs completed to be removed from our data set. Within 1000 completed HITs, Amazon should know whether they have a problematic worker.
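The arithmetic behind "85% of 310 instead of 330" is worth spelling out. A minimal sketch, assuming the 85% return rate quoted in this post applies to whoever can still be reached:

```python
def expected_completes(invited: int, removed: int, return_rate: float) -> float:
    """Expected second-wave completes once removed accounts drop off the invite list."""
    return (invited - removed) * return_rate


before = expected_completes(330, 0, 0.85)   # nobody removed
after = expected_completes(330, 20, 0.85)   # 20 accounts removed by Amazon
print(f"{before:.1f} -> {after:.1f} ({before - after:.1f} fewer completes)")
# prints: 280.5 -> 263.5 (17.0 fewer completes)
```

So the 20 removed workers cost roughly 17 completed responses in the second wave, on top of the roughly $40 financial cost.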

Some requesters may see this as a positive outcome of the bot panic a year ago, but we do not. We knew those were just poor-quality workers combined with requesters using insufficient qualifications. The fake bot panic might have been a kick in the pants for Amazon to remove some of these bad actors and clean up the marketplace, or at least monitor it more closely. From our point of view, there was not a problem to begin with.

How do you know a participant was removed from Mturk?

When you send an email to their worker ID through the API, the response will contain something like this:

"NotifyWorkersFailureCode": "HardFailure",
"NotifyWorkersFailureMessage": "Cannot send notification to non-active end points",
"WorkerId": "A2SVLXXXXXXXXX"

"Non-active end points" means the worker ID no longer exists. We know it did exist in the past because it is in our CSV files and database.
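With boto3, these entries come back in the `NotifyWorkersFailureStatuses` list of the `notify_workers` response. A minimal sketch for pulling out the worker IDs with a `HardFailure` code, so they can be subtracted from a longitudinal invite list. `SoftFailure` entries, which may be temporary, are deliberately skipped, and treating every `HardFailure` as a removed account is an assumption based on our experience described above.

```python
def removed_workers(response: dict) -> list:
    """Worker IDs whose notification failed with a HardFailure (likely removed accounts)."""
    return [
        status["WorkerId"]
        for status in response.get("NotifyWorkersFailureStatuses", [])
        if status.get("NotifyWorkersFailureCode") == "HardFailure"
    ]


example = {
    "NotifyWorkersFailureStatuses": [
        {
            "NotifyWorkersFailureCode": "HardFailure",
            "NotifyWorkersFailureMessage": "Cannot send notification to non-active end points",
            "WorkerId": "A2SVLXXXXXXXXX",
        }
    ]
}
print(removed_workers(example))  # ['A2SVLXXXXXXXXX']
```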

Depending on the time between studies, the average return rate from one part of a study to the next is 85-90%. The time between parts is a factor in the return rate, but 85-90% used to be a reasonable average with quality participants. We have some longitudinal studies with longer gaps coming up in the next month or so, and we will update this post with new statistics.

EDIT: Our longitudinal study with a one-month separation between parts did not have a single removed participant.

The difference between the three-week and one-month studies was that the three-week study reached deep into the Mturk pool, so many new Mturk workers took part in it. We had already run the study with 4000 participants, who were not allowed to participate again. With those 4000 removed from the eligible pool, many workers who were new to Mturk were participating, and Amazon was actively deleting any nefarious IDs.

Tuesday, June 4, 2019

The deprecation of the Mturk API

If you are not a coder, or if you are not an experienced requester on Mturk, this is going to cause huge problems for you. Read the Mturk Blog for more information.

If you need help, contact us.
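The post does not say what requesters should move to. As far as we know, the current requester API is reached through the AWS SDKs; below is a minimal sketch with Python's boto3, assuming the us-east-1 endpoints shown (the sandbox for testing HITs, production for live ones). Credentials come from the usual AWS configuration files or environment variables.

```python
# Endpoints for the MTurk requester API in us-east-1.
SANDBOX_URL = "https://mturk-requester-sandbox.us-east-1.amazonaws.com"
PRODUCTION_URL = "https://mturk-requester.us-east-1.amazonaws.com"


def client_kwargs(sandbox: bool = True) -> dict:
    """Keyword arguments for boto3.client("mturk", ...); defaults to the sandbox."""
    return {
        "region_name": "us-east-1",
        "endpoint_url": SANDBOX_URL if sandbox else PRODUCTION_URL,
    }


def make_client(sandbox: bool = True):
    """Create an MTurk client; boto3 is imported lazily so the helper above needs no install."""
    import boto3

    return boto3.client("mturk", **client_kwargs(sandbox))


# Usage (requires boto3 and AWS credentials):
# client = make_client(sandbox=True)
# print(client.get_account_balance()["AvailableBalance"])
```

Defaulting to the sandbox means a forgotten flag costs nothing while you test a HIT.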

Saturday, August 11, 2018

The BOT problem on Mturk

Last week the academic community was shocked when a researcher noticed that the GPS data from one of their Qualtrics studies showed multiple submissions from the same location. This went viral, and everyone started checking their past studies for similar data. The assumption quickly spread that bots were completing studies on Mturk, and numerous posts on Facebook, New Scientist, and Reddit have been fueling the fire. It is highly unlikely that half of the Mturk participant pool is bots, as some are suggesting. It is more likely that the researchers reporting that 25-50% of their participants are bots are inexperienced with the platform. Experience on Mturk is essential to avoiding the pitfalls associated with problematic participants, and with the instructions below you can solve the bot problem yourself.

Most of the reported problems come from using poor qualifications upfront.

1. Use an approval percentage of 99% and above, not the 95% commonly used by universities. In an academic setting, 95% is an A; in a professional setting, 95% is abysmal. Many studies are published on Mturk using 80-95%, and some use no qualifications at all. Your study WILL fill using 99% and above if you pay a fair wage.

2. Use location USA, or USA and Canada, only. Amazon verifies Social Security numbers for federal tax purposes, so each worker ID is attached to a single participant. If Amazon removes a participant from the platform, they will not be allowed to get another account. There are many husbands, wives and adult children working on Mturk, along with friends and roommates. Participants discuss Mturk and how they earn money on the platform, so duplicate IP addresses are possible and are not cause for alarm, since they usually come from referrals.

3. Use HITs approved >1000 to exclude new accounts that could be compromised.

4. Use a single qualification block list. When you reject a participant for failing an attention check, writing gibberish in response to writing prompts, or an impossibly fast completion time, add them to that qualification so they cannot participate for you in the future.
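The four settings above can be expressed as the `QualificationRequirements` list passed to boto3's `create_hit`. A minimal sketch, assuming MTurk's published system qualification IDs for approval rate, number of HITs approved, and locale; `block_list_id` stands for the ID of a block-list qualification you created yourself and is a placeholder here.

```python
# MTurk's built-in (system) qualification type IDs.
APPROVAL_RATE = "000000000000000000L0"  # Worker_PercentAssignmentsApproved
HITS_APPROVED = "00000000000000000040"  # Worker_NumberHITsApproved
LOCALE = "00000000000000000071"         # Worker_Locale

# Ineligible workers cannot find, preview, or accept the HIT.
GUARD = "DiscoverPreviewAndAccept"


def qualification_requirements(block_list_id: str, countries=("US",)) -> list:
    """Tips 1-4: 99%+ approval, allowed countries, >1000 approved HITs, not on the block list."""
    return [
        {"QualificationTypeId": APPROVAL_RATE, "Comparator": "GreaterThanOrEqualTo",
         "IntegerValues": [99], "ActionsGuarded": GUARD},
        {"QualificationTypeId": LOCALE, "Comparator": "In",
         "LocaleValues": [{"Country": c} for c in countries], "ActionsGuarded": GUARD},
        {"QualificationTypeId": HITS_APPROVED, "Comparator": "GreaterThan",
         "IntegerValues": [1000], "ActionsGuarded": GUARD},
        # Blocked workers hold the block-list qualification, so require that it is absent.
        {"QualificationTypeId": block_list_id, "Comparator": "DoesNotExist",
         "ActionsGuarded": GUARD},
    ]
```

For USA and Canada, call `qualification_requirements(block_list_id, countries=("US", "CA"))` and pass the result as `QualificationRequirements=...` when creating the HIT.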

Many of the posts causing concern are about the GPS location of participants. How can Qualtrics record a GPS location when the participant has location services turned off, or is using privacy add-ons, Do Not Track, or a VPN for a more secure environment? When a precise location cannot be found, Qualtrics uses the IP address to estimate a general location. This is why so many submissions appear to share the same location.
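If you want to see how often this happens in your own data, you can group responses by recorded location. A minimal sketch, assuming a Qualtrics CSV export with `ResponseId`, `LocationLatitude` and `LocationLongitude` columns (column names are an assumption; check your export), and assuming the extra Qualtrics header rows can be skipped because real response IDs start with `R_`.

```python
import csv
import io
from collections import defaultdict


def shared_locations(csv_text: str, min_count: int = 2) -> dict:
    """Map (latitude, longitude) to the response IDs recorded there, keeping repeats only."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if not row["ResponseId"].startswith("R_"):
            continue  # skip Qualtrics' extra header rows (question text, import IDs)
        key = (row["LocationLatitude"], row["LocationLongitude"])
        groups[key].append(row["ResponseId"])
    return {loc: ids for loc, ids in groups.items() if len(ids) >= min_count}
```

Because the location may be estimated from the IP address, a shared location is a prompt to look more closely at those responses, not proof of bots; households and referrals share IP addresses too.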

The easiest solution to remove bots from your data is to add a simple captcha or two to your study like "What is 12-8?". If you are using Qualtrics you can incorporate reCAPTCHA directly into your study as well.

If a simple captcha does not seem secure enough, you can present your question as a JPEG image, so a bot would have to read the text in the image file and then give the correct answer. A paragraph of text followed by a specific instruction to write a sentence is another good check. Not only does this screen out inattentive participants, it also screens out bots, because if they do write something, it is usually nonsense ("VERY GOOD STUDY", etc.).
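Written answers like "VERY GOOD STUDY" can be flagged for manual review before you decide what to do with a submission. A minimal sketch; the word threshold and the list of generic phrases are hypothetical and should be tuned to your own writing prompt.

```python
import re

# Hypothetical examples of generic filler answers.
GENERIC_PHRASES = {"very good study", "good study", "good", "nice"}


def flag_response(text: str, min_words: int = 8) -> list:
    """Reasons a written answer needs manual review; an empty list means no flags."""
    reasons = []
    cleaned = text.strip().lower()
    words = re.findall(r"[a-z']+", cleaned)
    if len(words) < min_words:
        reasons.append("too short")
    if cleaned.rstrip(".!") in GENERIC_PHRASES:
        reasons.append("generic phrase")
    if text.isupper():
        reasons.append("all caps")
    return reasons


print(flag_response("VERY GOOD STUDY"))  # ['too short', 'generic phrase', 'all caps']
```

Flags only mark responses for a human to read; they are not an automatic reason to reject.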

If your university does not allow you to reject participants because of ethical concerns, you can add them to a qualification so they do not participate for you in the future. Yes, you do end up paying them once, but you will not pay the same fraudulent participant again on another study.
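A block-list qualification can be created once and reused on every study. A minimal sketch with boto3's `create_qualification_type` and `associate_qualification_with_worker`, assuming `client` is a boto3 MTurk client; the qualification name and description are placeholders, and the name must be unique among your qualification types. Future HITs then exclude these workers with a `DoesNotExist` requirement on the returned ID.

```python
def create_block_list(client, name: str = "Do not invite") -> str:
    """Create a qualification used only to exclude workers; returns its ID."""
    response = client.create_qualification_type(
        Name=name,
        Description="Workers excluded from this requester's future studies.",
        QualificationTypeStatus="Active",
    )
    return response["QualificationType"]["QualificationTypeId"]


def block_workers(client, qual_id: str, worker_ids: list) -> None:
    """Add workers to the block list without sending them a notification email."""
    for worker_id in worker_ids:
        client.associate_qualification_with_worker(
            QualificationTypeId=qual_id,
            WorkerId=worker_id,
            IntegerValue=1,
            SendNotification=False,
        )
```

Adding workers to a qualification like this does not reject their work or send them a warning, which fits the advice above when your university does not allow rejections.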

We have published well over 1 million HITs over the last 4 years with close to 80,000 unique participants and our block list is just under 2000 or 2.5%. That 2.5% number is from using very high qualifications, imagine what would happen if you used no qualifications or 95%?

The time and effort involved in developing your research should not be wasted on poor responses. Hopefully the tips above help you in your research. As always, if you need experienced help with your data collection, contact me at joe@mturkdata.com

Article on Wired about the bot scare https://www.wired.com/story/amazon-mechanical-turk-bot-panic/

Tuesday, July 18, 2017

Using Attention Checks in Your Surveys May Harm Data Quality

Many researchers who are new to online studies ask us about using attention checks in their studies. Our view is that they can be helpful to eliminate random clickers, but should not be used as a guarantee for quality data.

A proper attention check needs to be straightforward and unambiguous. It should not be designed to trick participants, but it can require reading a block of text to ensure participants are reading instructions. We do not advise using memory checks as attention checks, because they only gauge the participant's memory, not whether they are paying attention.

As far as Mturk goes, using high qualifications and the time-tested removal of problematic participants is the best way to ensure quality data.

Below is a link to a new article published on the Qualtrics blog about attention checks. It is a short and interesting read which we will continue to follow as they make advancements in this area.

https://www.qualtrics.com/blog/using-attention-checks-in-your-surveys-may-harm-data-quality/

Friday, February 17, 2017

HITs not getting completed? You may be blocked!

We know that if a worker is blocked, Amazon sends the worker a nastygram telling them their account is in jeopardy. Blocking workers is not a good practice, because it will hurt your future work completion times and your reputation as a requester. The best way to deal with workers who are not performing up to standard is to add them to a qualification that prevents them from participating in your HITs in the future.

Did you know that workers can block you as a requester? It can be difficult for turkers to find well-paid work from requesters who have a good reputation. Many use scripts to weed out the bad requesters and find well-paid work. These scripts can block your requester account so that your HITs are never seen by a majority of the workforce.

We polled 100 workers and asked them, "Do you use HIT Scraper or some other script to block requesters?" 57 of the 100 said yes! That is more than half of the workers we polled, any of whom could stop seeing your work entirely.

Using scripts to auto-complete HITs is a violation of Amazon's Terms of Service, but using scripts to scrape Mturk for well-paid work is allowed.

There are a few scripts that allow participants to block requesters; one of the best known is HIT Scraper. HIT Scraper scrapes Mturk at designated intervals and shows the work available, the number of HITs available, the Turkopticon score, and the amount the work pays.

If workers do not like your reputation, your pay, the amount of work required, or anything else, all they have to do is click one button and they will never see your requester account again.

There are a variety of scripts available to workers. Some calculate daily earnings, some ping when their favorite requesters post, some are complete packages to make Mechanical Turk function better, but all are useful tools to help workers maximize their time on Mturk.

How do you avoid being blocked by workers?

It really is easy.

1. Pay a fair wage. If you do not know what to pay, a good rule of thumb is $0.15 per minute, or $9 an hour.

2. Respond to worker emails

3. Test your HITs before you publish

4. If you must reject, provide a sound reason for each rejection; do not just upload a .csv file with an "x" marked for rejection.

5. Maintain a good qualification to prevent problematic participants from working for you in the future. This will help so that you do not end up rejecting the same people over and over.
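The $0.15-per-minute rule of thumb from item 1 turns directly into a reward amount. A minimal sketch, assuming you have a reasonable estimate of the study's completion time in minutes; rounding up to the next cent is our choice so the effective rate never falls below the target. MTurk's `create_hit` takes the reward as a string in US dollars, which is why the function returns one.

```python
from decimal import Decimal, ROUND_UP

RATE_PER_MINUTE = Decimal("0.15")  # the rule of thumb above: $0.15/min = $9/hour


def fair_reward(estimated_minutes: float) -> str:
    """Reward string for a HIT, rounded up to the next cent."""
    amount = RATE_PER_MINUTE * Decimal(str(estimated_minutes))
    return str(amount.quantize(Decimal("0.01"), rounding=ROUND_UP))


print(fair_reward(12))   # 1.80
print(fair_reward(7.5))  # 1.13
```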

Thursday, December 15, 2016

The fee list keeps growing

The custom qualification fee list keeps expanding. If you need to run a study of 1000 married participants with a bachelor's degree, Amazon will charge you $1000 in qualification fees ($0.50 each for Marital Status - Married and US Bachelor's Degree, per the list below) PLUS 40% on payments to participants. Who has this kind of budget?

The best option is to publish your own screening HITs for $0.04 each plus Amazon's $0.01 per-HIT fee; 6000 participants can be screened for $300 including Amazon fees. Using Unique Turker, which keeps workers from taking the same study twice when you split it into several small HITs, you can also lower your fee from 40% to 20%. It does take a little longer to get results because you need to publish on multiple days, but the savings can be huge.
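The comparison above can be checked with a little arithmetic. A minimal sketch using the figures in this post: premium qualification fees charged per assignment plus a 40% commission on the reward, versus a $0.04 screening HIT where Amazon's 20% commission would be under a cent, so its $0.01 minimum fee applies. The $2.00 main-study reward in the usage lines is hypothetical.

```python
from decimal import Decimal


def premium_cost(n: int, qual_fees: list, reward: str, commission: str = "0.40") -> Decimal:
    """Premium qualification fees per assignment, plus the reward and Amazon's commission on it."""
    fees = sum(Decimal(f) for f in qual_fees)
    paid = Decimal(reward) * (1 + Decimal(commission))
    return ((fees + paid) * n).quantize(Decimal("0.01"))


def screening_cost(n: int, reward: str = "0.04", min_fee: str = "0.01") -> Decimal:
    """Self-run screening HIT: reward plus Amazon's $0.01 minimum fee per assignment."""
    return ((Decimal(reward) + Decimal(min_fee)) * n).quantize(Decimal("0.01"))


# Married + bachelor's degree premium qualifications, hypothetical $2.00 reward.
print(premium_cost(1000, ["0.50", "0.50"], reward="2.00"))  # 3800.00
print(screening_cost(6000))                                 # 300.00
```

With a zero reward, `premium_cost` reduces to the $1000 in qualification fees quoted above, and the screened list of workers can be reused on later studies.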

The two most popular qualifications were not even on this list when this post was first written: the most requested qualifications at Mturk Data are gender and employment. (Gender and employment status do appear in the 4/12/2018 update below.)

I also doubt the validity of these qualifications. People move, change jobs, and get married or divorced. Will Amazon update these qualifications as things change over time? A long-standing Mechanical Turk qualification is location by state, and Amazon has admitted they do not update it.

Run your own qualifications, save money, get reliable results.

Here are the current fees for custom qualifications as of 4/12/2018 (note: these are per completed HIT):

Blogger: $0.25

Born 1918 to 1960 (Age 55 or older): $0.50

Born 1961 to 1971 (Age 45-55): $0.50

Born 1972 to 1981 (Age 35-45): $0.50

Born 1982 to 1986 (Age 30-35): $0.50

Born 1987 to 1991 (Age 25-30): $0.50

Born 1992 to 1999 (Age 18-25): $0.50

Borrower - Auto Loans: $0.40

Borrower - Business Loan: $0.40

Borrower - Credit Cards: $0.40

Borrower - Home Mortgage: $0.40

Borrower - Personal Loan: $0.40

Borrower - Student Loan: $0.40

Car Owner: $0.25

Current Residence - Owned: $0.40

Current Residence - Rented: $0.40

Daily Internet Usage - 1 to 4 hours: $0.30

Daily Internet Usage - 5 to 7 hours: $0.30

Daily Internet Usage - 7+ hours: $0.30

Employment Industry - Banking & Financial Services: $0.40

Employment Industry - Education: $0.40

Employment Industry - Food & Beverage: $0.40

Employment Industry - Government & Non-Profit: $0.40

Employment Industry - Healthcare: $0.40

Employment Industry - Manufacturing: $0.40

Employment Industry - Media & Entertainment: $0.40

Employment Industry - Retail, Wholesale & Distribution: $0.40

Employment Industry - Software & IT Services: $0.40

Employment Sector - Non-Profit: $0.30

Employment Status - Full time (35+ hours per week): $0.35

Employment Status - Part time (1-34 hours per week): $0.35

Employment Status - Unemployed: $0.35

Exercise - Every Day: $0.30

Exercise - Four Plus Times a Week: $0.30

Exercise - Not at All: $0.30

Exercise - Once a Week: $0.30

Exercise - Two to Three Times a Week: $0.30

Facebook Account Holder: $0.05

Financial Asset Owned - Certificate of Deposit (CD): $0.40

Financial Asset Owned - Checking Account: $0.40

Financial Asset Owned - Common Share Stocks: $0.40

Financial Asset Owned - Exchange-Traded Fund (ETF): $0.40

Financial Asset Owned - Money Market Account: $0.40

Financial Asset Owned - Mutual Funds: $0.40

Financial Asset Owned - Real Estate Investment Trusts (REITs): $0.40

Financial Asset Owned - Savings Account: $0.40

Financial Asset Owned - Stock Options: $0.40

Financial Asset Owned - U.S. Treasury Bills/Government Debt: $0.40

Gender - Female: $0.50

Gender - Male: $0.50

Google Account Holder: $0.05

Handedness - Left: $0.15

Handedness - Right: $0.15

Household Income - $100,000 or more: $0.50

Household Income - $25,000 - $49,999: $0.50

Household Income - $50,000 - $74,999: $0.50

Household Income - $75,000 - $99,999: $0.50

Household Income - Less than $25,000: $0.50

Insurance Policyholder - Auto Insurance: $0.40

Insurance Policyholder - Healthcare Insurance: $0.40

Insurance Policyholder - Home Owners Insurance: $0.40

Insurance Policyholder - Life Insurance: $0.40

Insurance Policyholder - Renters Insurance: $0.40

Job Function - Accounting & Finance: $0.40

Job Function - Administrative: $0.40

Job Function - Arts & Design: $0.40

Job Function - Education & Training: $0.40

Job Function - Engineering: $0.40

Job Function - Information Technology: $0.40

Job Function - Management: $0.40

Job Function - Marketing, Sales & Business Development: $0.40

Job Function - Operations: $0.40

Language Fluency (Basic) - Brazilian Portuguese: $1.00

Language Fluency (Basic) - Chinese Mandarin: $1.00

Language Fluency (Basic) - French: $1.00

Language Fluency (Basic) - German: $1.00

Language Fluency (Basic) - Spanish: $1.00

LinkedIn Account Holder: $0.05

Marital Status - Divorced: $0.50

Marital Status - Married: $0.50

Marital Status - Single: $0.50

Military experience: $0.30

Myspace Account Holder: $0.05

Online Purchase - Automotive Products: $0.40

Online Purchase - Baby & Kids: $0.40

Online Purchase - Books: $0.40

Online Purchase - Clothing & Shoes: $0.40

Online Purchase - Electronics & Computers: $0.40

Online Purchase - Groceries & Food: $0.40

Online Purchase - Handmade Products: $0.40

Online Purchase - Health & Beauty: $0.40

Online Purchase - Home & Garden: $0.40

Online Purchase - Jewelry: $0.40

Online Purchase - Movies: $0.40

Online Purchase - Music: $0.40

Online Purchase - Sports & Outdoor Equipment: $0.40

Online Purchase - Toys: $0.40

Online Purchase - Videogames: $0.40

Parenthood Status: $0.50

Pinterest Account Holder: $0.05

Primary Internet Device - Desktop: $0.40

Primary Internet Device - Laptop: $0.40

Primary Internet Device - Smartphone or Tablet: $0.40

Primary Mobile Device - Android: $0.50

Primary Mobile Device - iPhone: $0.50

Primary News Source - Online News (News Websites, News Apps): $0.30

Primary News Source - Podcasts: $0.30

Primary News Source - Print (Newspapers & Periodicals): $0.30

Primary News Source - Radio (AM/FM, Internet, Satellite): $0.30

Primary News Source - Social Media: $0.30

Primary News Source - TV (Late Night Comedy, Other): $0.30

Primary News Source - TV (Local/Cable News Broadcast): $0.30

Primary News Source - Word of Mouth: $0.30

Reddit Account Holder: $0.05

Single Family Home Resident: $0.30

Smoker: $0.30

Tablet Owner: $0.20

Tumblr Account Holder: $0.05

Twitter Account Holder: $0.05

US Bachelor's Degree: $0.50

US Graduate Degree: $0.65

US High School Graduate: $0.05

US Political Affiliation - Conservative: $0.40

US Political Affiliation - Liberal: $0.40

Vacation Frequency - Every Few Years: $0.30

Vacation Frequency - Every Month: $0.30

Vacation Frequency - Every Quarter: $0.30

Vacation Frequency - Every Year: $0.30

Vacation Frequency - Never: $0.30

Voted in 2012 US Presidential Election: $0.10

Voted in 2016 US Presidential Election: $0.10

YouTube Account Holder: $0.05

Thursday, October 13, 2016

Amazon Now Allows International Requesters Again

Amazon has changed their policy and will now start allowing some countries outside of the US to use the platform again.

This is great news for people who were removed from the platform two years ago.

Why should you still continue to use Mturk Data for your academic HIT publishing?

1. A 20% Amazon fee instead of 40% on HITs with 10 or more assignments.

2. We have published over 250,000 studies and have the experience you need to make your study run smoothly.

3. We can contact participants in longitudinal studies and invite them back for additional parts, usually with an 85% return rate depending on the time between parts.

4. We can easily send bonus payments to hundreds or thousands of participants.

5. Our publishing fees are low, so your total study cost will still be less than paying Amazon's 40%.

6. Our reject rate is 2% or less.

7. Our accounts all have excellent ratings by participants on Turkopticon.

8. For additional fees we can target specific demographics for far less than Amazon is charging and can add an additional layer of validity to your studies.

9. We usually have studies completed the same day payment is received, so our design and publishing service is quick.

10. We help you avoid all the problems associated with not knowing the Mechanical Turk platform inside and out.

11. We make it easy for you to get the participants you need!
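Item 4 mentions bonuses for hundreds or thousands of participants. A minimal sketch of how that can be done with boto3's `send_bonus`, assuming `client` is a boto3 MTurk client and a hypothetical list of (worker ID, assignment ID, amount) tuples. `BonusAmount` is a string in US dollars, and deriving `UniqueRequestToken` from the assignment ID is our assumption for making retries safe, since MTurk uses that token to avoid paying a repeated request twice.

```python
def send_bonuses(client, bonuses: list, reason: str) -> int:
    """Pay (worker_id, assignment_id, amount) bonuses; returns how many were sent."""
    sent = 0
    for worker_id, assignment_id, amount in bonuses:
        client.send_bonus(
            WorkerId=worker_id,
            AssignmentId=assignment_id,
            BonusAmount=amount,   # string in US dollars, e.g. "0.50"
            Reason=reason,        # shown to the worker
            UniqueRequestToken=f"bonus-{assignment_id}"[:64],  # token limit is 64 characters
        )
        sent += 1
    return sent
```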

If you would like for us to help you with your project, please contact us at design@mturkdata.com

Wednesday, August 24, 2016

The Future of Innovation - More money out of your pocket

It seems like every announcement that Amazon makes on the Mechanical Turk blog costs requesters money.

The newest dig into your pocket is a set of custom qualifications that Amazon calls premium qualifications.

This is a strange feature to introduce after Amazon claimed that survey requesters made up only 2% of publishers when it quadrupled survey prices in 2015.

Now they want more of your money. Currently the only premium qualification is for primary mobile device. They would like to charge $0.50 PER ASSIGNMENT to use this qualification.

Do not fall for this trap; just run your own qualifications. It will cost you a tenth of the price, and you will have the list of workers FOREVER, not just for one publishing.

Although it has not been announced yet, future pricing could be $0.50 per premium qualification.

Age = $0.50

Gender = $0.50

Education = $0.50

Employment = $0.50

Right away you are out $2 per participant without even paying participants a penny!
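A quick sketch of how that speculative pricing scales, assuming the $0.50 is charged per assignment for each premium qualification attached to the HIT. The 500-participant figure below is purely illustrative.

```python
from decimal import Decimal

PRICE_PER_QUALIFICATION = Decimal("0.50")  # speculative figure from this post


def premium_qualification_cost(participants: int, qualifications: int) -> Decimal:
    """Extra charge when every assignment carries the same premium qualifications."""
    return PRICE_PER_QUALIFICATION * qualifications * participants


# age, gender, education, employment -> 4 qualifications
per_participant = premium_qualification_cost(1, 4)   # $2.00
five_hundred = premium_qualification_cost(500, 4)    # $1,000.00 before any pay
```

Because the charge repeats on every publishing, a one-time screening study that you own becomes cheaper the more often you reuse the list.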

It is best to do it yourself.

Make a simple study and pay participants a small fee for their data. Then simply contact the participants that fit your demographic criteria when you need them.
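A minimal sketch of the do-it-yourself approach, assuming boto3's MTurk client: create a private qualification, grant it to the workers whose screener answers fit your criteria, and require it on the main study. The helper names, the qualification name, and the value 1 are illustrative choices, not anything Amazon prescribes.

```python
def create_screened_pool(client, worker_ids, name, description):
    """Create a private qualification and grant it to screened workers.

    `client` is assumed to be a boto3 MTurk client, e.g. boto3.client("mturk").
    Returns the new QualificationTypeId.
    """
    response = client.create_qualification_type(
        Name=name,
        Description=description,
        QualificationTypeStatus="Active",
    )
    qual_id = response["QualificationType"]["QualificationTypeId"]
    for worker_id in worker_ids:
        client.associate_qualification_with_worker(
            QualificationTypeId=qual_id,
            WorkerId=worker_id,
            IntegerValue=1,          # 1 = "fits the criteria" in this sketch
            SendNotification=False,  # invite them separately when ready
        )
    return qual_id


def screened_pool_requirement(qual_id):
    """QualificationRequirements entry limiting a HIT to the screened pool."""
    return {
        "QualificationTypeId": qual_id,
        "Comparator": "EqualTo",
        "IntegerValues": [1],
        "ActionsGuarded": "DiscoverPreviewAndAccept",
    }
```

The requirement dict would be passed in the `QualificationRequirements` list when creating the main HIT. The same pattern, with a different value, works for a disqualifier list.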

Amazon's 40% fee is still higher than the total fees of using Mturk Data, with us doing the hard work for you.

If you do need a custom qualification and do not know how to manage it, we can do that for you at significantly less than what Amazon is charging.


Saturday, May 28, 2016

Due to an Amazon Mechanical Turk temporary system outage

Due to an Amazon Mechanical Turk temporary system outage that has now lasted well over two days, we are unable to publish any of the work that is getting backlogged over the long holiday weekend. We would love to be enjoying the holiday, but we are waiting as many of you are to get this work published and try to keep our customers happy.


Over the last week we have experienced numerous issues with the platform until its full failure on Thursday.

We apologize to those of you who rely on Mechanical Turk for income, but our hands are tied. We have plenty of work, just no way to publish it. We also lost a couple of our larger clients because they needed work quickly and we could not provide it.

Thanks for being great workers and participants and hopefully this will be restored sometime this month or maybe the beginning of June? Temporary apparently means days for Amazon.

And also thank you, Mechanical Turk, for "Bringing Future Innovation to Mechanical Turk" at two to four times the price it was last year, without a platform for us to work on.

Wednesday, May 18, 2016

New International Participants are Being Accepted

Earlier this month reports started coming in that Amazon had lifted the ban on new international workers. Multiple threads have been posted on many worker forums confirming that Amazon has now accepted dozens and possibly hundreds of dormant accounts that have been pending since 2012.

Overall, we believe this will have a minimal impact on Mturk as a whole. For academic studies to target individual countries other than the United States and India, there would have to be thousands of new registrations and participants would have to be able to get cash from Mturk.

For people outside of the USA and India, Amazon Mechanical Turk still only pays workers with gift card credits on Amazon.com.

This influx of international workers could initially help requesters like ScoutIt aka Jon Brelig complete tens of thousands of HITs that pay $1-$2 per hour, but for the most part, it will have no impact on the experienced requester or worker.

Hopefully new participants studied the participation agreements they signed when they submitted their accounts for approval and understand they will not be paid in cash.


Tuesday, January 19, 2016

How to Pay Less than 20%, Minimum Pricing on Mechanical Turk

When Amazon changed their pricing structure in July, a lot of the focus was on the huge commission increase directed at academic requesters. Academic requesters were not the only ones impacted; everyone using the platform was hit with huge price increases.

One very small group of requesters actually saw a price decrease. Requesters who were using the "Masters" qualifications had their fees reduced from 30% to 25%. But for everyone else on the Mechanical Turk platform, July 21st, 2015 was a difficult day requiring hard budgetary decisions.

These fractions of cents may seem insignificant to a requester who only needs to publish 100 HITs or is only publishing a one-time batch, but as the number of HITs increases and the number of times the batches are published increases, the costs really begin to add up.

Amazon's rounding rules leave some loopholes in the minimum pricing structure.

From the Amazon pricing page


What rounding rules are applied when calculating fees?

After the fee is calculated, we round half up amounts greater than $0.01 – we round down if the fractional amount is less than half a penny, and round up otherwise. For example, $0.104 is rounded to $0.10, $0.105 is rounded to $0.11, and $0.108 is rounded to $0.11.
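To see how this rule behaves, here is a small Python sketch using the standard `decimal` module's ROUND_HALF_UP mode, which reproduces the three examples in the quote. Decimal is used instead of float because binary floats cannot represent values like 0.105 exactly and may round them the wrong way.

```python
from decimal import Decimal, ROUND_HALF_UP


def round_fee(amount: Decimal) -> Decimal:
    """Round a computed fee to whole cents, with exactly half a penny rounding up."""
    return amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


for raw in ("0.104", "0.105", "0.108"):
    print(raw, "->", round_fee(Decimal(raw)))
# 0.104 -> 0.10
# 0.105 -> 0.11
# 0.108 -> 0.11
```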

These rounding rules will allow you to get the most out of your Mturk HIT publishing.

Let's address some optimum payments and optimum costs so that you actually pay Amazon the minimum amount possible for the maximum number of responses.

We are not going to discuss publishing HITs at $0.00 or $0.01 because both of those payments are unethical and paying 100% commission is bad business. We are also not going to discuss minimum payments using "Masters" qualification because it is an unnecessary up-charge that can be eliminated using the proper qualifications.

Yes, increasing payment to reduce commission is a fool's game unless it is done wisely.

If you have a rate-the-photo task and you are paying $0.02 per photo, you pay Amazon a 50% commission on each task.

It is better to design your HIT so that the worker rates 3 photos per HIT and you pay $0.06, which reduces your commission to about 16.7%, as opposed to the 50% commission you would pay with a single photo in each task.
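The photo example can be checked with a short sketch. It assumes a 20% commission rate, a $0.01 minimum fee per assignment (which is what makes a $0.02 task cost 50%), and the half-up rounding from Amazon's pricing page quoted above. The constant and function names are illustrative.

```python
from decimal import Decimal, ROUND_HALF_UP

COMMISSION_RATE = Decimal("0.20")  # assumed rate for HITs with fewer than 10 assignments
MIN_FEE = Decimal("0.01")          # assumed minimum fee per assignment


def amazon_fee(reward: Decimal) -> Decimal:
    """Fee on one assignment: rate applied, rounded half up, floored at the minimum."""
    raw = reward * COMMISSION_RATE
    rounded = raw.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return max(rounded, MIN_FEE)


def effective_commission(reward: Decimal) -> Decimal:
    """Fee as a fraction of what the worker is paid."""
    return amazon_fee(reward) / reward


one_photo = effective_commission(Decimal("0.02"))     # 0.5 -> 50%
three_photos = effective_commission(Decimal("0.06"))  # about 0.167 -> 16.7%
```

The same function makes it easy to scan other reward levels for ones where rounding works in your favor.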

Tuesday, September 1, 2015

Status after #Mturkgate

We have seen a moderate decrease in requests because of the increased commission structure from Amazon in July.

We want to assure everyone that the 40% commission does not apply to MturkData. Since July we have extensively tested different publishing techniques that will keep the Amazon commission at 20% instead of the 40% that Amazon wants to charge academic requesters. Unique users are guaranteed across multiple publishings and over extended periods of time.

We know you have a lot of choices when it comes to sourcing participants and there are multiple platforms available for you to use. We do the hard work for you and will help guide you through the process to ensure that you have usable data for your research.


Wednesday, July 22, 2015

Moving Forward with Mechanical Turk - To Participants

Hi all,

As most of you are aware, Amazon increased fees yesterday. For academic requesters this is a pretty big deal because Amazon singled them out to pay a 40% commission on HITs, up from the previous 10%.

If they use Masters (which the system still selects by default, even after two years), the fee is 45%.

We have published surveys for over 100 different academics last year, we work with at least a dozen requesters on Mturk who publish surveys and we work with many commercial customers as well. We are all hit hard by this and as you have seen there has been a massive exodus of large requesters from Mturk.

For commercial customers, the fee increased to 20%, which is not that big of a deal, but for academics to have a fee increased to 40%, it is huge.

Let's consider an academic who wants to publish a 15-minute study to 500 participants and pay workers $2.50 each.

Under the old pricing, they would pay Amazon $125, now they would have to pay Amazon $500.
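The arithmetic behind those two numbers, as a small sketch: 500 participants at $2.50 is $1,250 in worker pay, and the commission is a percentage of that. The function name is illustrative.

```python
from decimal import Decimal


def amazon_commission(participants: int, reward: Decimal, rate: Decimal) -> Decimal:
    """Amazon's cut on top of what participants are paid."""
    return participants * reward * rate


old_fee = amazon_commission(500, Decimal("2.50"), Decimal("0.10"))  # $125
new_fee = amazon_commission(500, Decimal("2.50"), Decimal("0.40"))  # $500
```

At the 20% commercial rate the same study would cost $250 in fees, so every participant costs $0.50 more under the academic rate than under the commercial one.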

There is a way around this fee though and that is to publish HITs as batches. We are working with others and sharing our code to help all of us only pay the 20% commission that is available to batch requesters.

Basically, you set up your HIT as a batch, with the .csv file containing the survey link. You insert a no-repeat JavaScript into the HIT code so that although there may be 500 HITs available, each worker can only accept one.

I am sure many of you have seen this in HITs from Sergey and other requesters, where a message says something like "You have already accepted the maximum number of HITs for this requester, please return". This will be the new way to publish surveys without paying Amazon's horrendous fee. If you accept two HITs, you will have to return one.
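The no-repeat script itself runs in the worker's browser. The requester-side bookkeeping can be sketched in Python: build a batch input file where every row carries the same survey link, then check the downloaded results for any worker ID that shows up more than once. The `survey_url` column name is a hypothetical placeholder that must match your own HIT template; `WorkerId` is assumed to be the worker column in the batch results file.

```python
import csv
import io
from collections import Counter


def batch_input_csv(survey_url: str, n_hits: int) -> str:
    """Build a batch input file: one row per HIT, all pointing at the same survey.

    "survey_url" is a hypothetical column name; it must match the
    ${survey_url} placeholder in your own HIT template.
    """
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["survey_url"])
    for _ in range(n_hits):
        writer.writerow([survey_url])
    return buf.getvalue()


def repeat_workers(results_text: str) -> list:
    """Return worker IDs that appear more than once in a batch results file."""
    rows = csv.DictReader(io.StringIO(results_text))
    counts = Counter(row["WorkerId"] for row in rows)
    return sorted(worker for worker, n in counts.items() if n > 1)
```

Running the duplicate check before approving gives you a safety net in case the browser-side script was bypassed or failed to load.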

We all know that return rate "can" be used as a qualification using the API, but we also all know it rarely is because there is no point to it. Returning a HIT is not that big of a deal and is far better than being rejected for taking a HIT multiple times and it is also (in our opinion) acceptable so that we can transfer a fair wage to participants as opposed to giving that money to Amazon.

I just want to make everyone aware that this is a necessity. In order to pay a fair wage, we cannot pay Amazon a 40% commission when others are being charged half of that and other platforms are much less expensive. I understand that many requesters on Mturk do not pay a fair wage and this is not going to help with the overall struggle, but we are going to continue pricing our HITs at a $9 per hour minimum. If customers go to other platforms, so be it. At least we know we are doing the right thing and behaving ethically, and from the tens of thousands of HITs we have published in the last year, we know that all of our academic customers feel the same way.


Tuesday, June 23, 2015

Amazon Deals a Devastating Blow to Academic Requesters and Workers

Amazon sent out emails to all requesters last night detailing their new fee structure.

Although the original blog post, “Bringing Future Innovation to Mechanical Turk,” stated that the commission would rise to at most 35%, Amazon actually raised it to a whopping 40% for academic requesters. Most requesters will see a 20% commission structure, but for any HIT with 10 or more assignments, Amazon adds an additional 20% on top of the base fee.

Along with this fee increase, they devalued their “master” workers by reducing the fee from 30% to 25%, and with the base fee increasing to 20%, this further reduced the value of this “elite” qualification.
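The new schedule can be written as a small function. This is a sketch under our reading of the email: a 20% base, an extra 20% when a single HIT has 10 or more assignments, and Masters adding 5 points on top (30% became 25% for a HIT with fewer than 10 assignments). The function name is illustrative.

```python
from decimal import Decimal


def commission_rate(assignments_per_hit: int, uses_masters: bool = False) -> Decimal:
    """Amazon's commission as a fraction of worker pay, under the assumptions above."""
    rate = Decimal("0.20")          # base commission
    if assignments_per_hit >= 10:
        rate += Decimal("0.20")     # surcharge for 10 or more assignments on one HIT
    if uses_masters:
        rate += Decimal("0.05")     # Masters premium after the change
    return rate
```

This is also why publishing a study as many single-assignment HITs keeps the rate at 20%, while one HIT with 500 assignments pays 40%.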

Amazon stated in the email to requesters that the 40% fee is only going to affect 0.3% of all work published on Mturk. This may be correct, but it is easy to skew that number when Speechpad is posting almost a quarter of a million dollars of HITs in a month and CastingWords is at 44k. What happens to that 0.3% when you remove almost $300k of posted HITs? What happens to Amazon Mechanical Turk when those two requesters move to their own platforms because they do not want to pay DOUBLE what they have been paying for many years?

Amazon raising their fees somewhat is not that big of a deal, but gouging specific requesters is unconscionable. According to Amazon, survey requesters are not using more resources, nor do they have a huge impact on the platform. How is this additional fee justifiable?

Simply stated, it is not. There are a half dozen other platforms available where we can publish surveys for the same price or less.

For requesters who are using Mturk as a source of slave labor and paying workers less than $2 per hour, these rate hikes are insignificant. For decent requesters who value their workforce and support ethical pay, this is a huge amount of money that will have to be accounted for.

Thursday, May 21, 2015

How to destroy Amazon Mechanical Turk 101

http://mechanicalturk.typepad.com/blog/2015/05/bringing-future-innovation-to-mechanical-turk.html


Quoted from the Amazon Mechanical Turk blog today....

Examining our Commission Structure

To continue growing Mechanical Turk, we are analyzing possible changes to our commission structure for Requesters. These changes would be intended to allow us to increase our investment in the marketplace and bring future innovation to Mechanical Turk that will benefit both Requesters and Workers. Mechanical Turk has not changed its commission structure since launch in 2005. As part of this, we are considering changing our base commission to somewhere between 20% and 35%. Today, our base commission is 10%. We will share the outcome of this analysis in June.

This will either further reduce payments to workers, or it will force requesters into finding other platforms like CrowdFlower to post work on. The minor changes in coding they made this year with qualifications is hardly a justification for raising the fee 100-250%.

Amazon decimated the international participant pool in 2012 by not allowing any new international worker accounts. It is almost impossible to publish any studies dealing with locations outside of the United States, with the exception of India. Then last August they removed a great source of income by effectively banning all international requesters.

This is a sad day for both workers and requesters on Mturk. I guess we will see how this plays out in June, but judging by past Amazon decisions, I am not optimistic.

Thursday, May 7, 2015

If you needed more proof that the Amazon Master Qualification is useless and arbitrary....

Taken from Reddit so validity is questionable, but these are posts from the latest round of workers given the mysterious Amazon Master Qualification.

According to these posts, workers with between 337 and 1,800 approved HITs received the Masters qualification.

These are the workers that Amazon describes as elite and charge requesters a premium of 30% to utilize.

My intention is not to bash these workers nor imply they are doing poor quality work, but they are inexperienced and once again this shows that there needs to be some transparency behind this qualification if Amazon feels they can justify requesters paying 200% more to utilize them.

Standard Amazon fees are 10%, and honestly, I will not pay Amazon 30% to use a worker with only 300 to 1,800 HITs under their belt.

We have published over 200 academic studies with 9,500 unique participants across 40,000 HITs with a 99% approval rate, and have never once paid the additional fee for Master workers. In fact, our total publishing cost for academics is 28% of payment, which is below Amazon's fee for Master workers.

Utilizing qualifications, proper HIT design, and testing will eliminate the need for any kind of Master worker and inflated fees for these participants. It is far better to pay the participants directly for great work than pay it in fees to Amazon.
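As a sketch of "proper qualifications" in place of Masters, the built-in system qualifications can be combined into a `QualificationRequirements` list for the MTurk API. The 98% approval floor follows the advice elsewhere on this blog; the 1,000 approved-HIT minimum and the US locale are illustrative defaults, not recommendations from this post.

```python
# System qualification type IDs published by Amazon for the production site.
PERCENT_APPROVED = "000000000000000000L0"  # Worker_PercentAssignmentsApproved
NUMBER_APPROVED = "00000000000000000040"   # Worker_NumberHITsApproved
LOCALE = "00000000000000000071"            # Worker_Locale


def baseline_requirements(min_approval=98, min_approved_hits=1000, country="US"):
    """Qualification requirements used instead of the Masters premium."""
    guarded = "DiscoverPreviewAndAccept"
    return [
        {"QualificationTypeId": PERCENT_APPROVED, "Comparator": "GreaterThanOrEqualTo",
         "IntegerValues": [min_approval], "ActionsGuarded": guarded},
        {"QualificationTypeId": NUMBER_APPROVED, "Comparator": "GreaterThanOrEqualTo",
         "IntegerValues": [min_approved_hits], "ActionsGuarded": guarded},
        {"QualificationTypeId": LOCALE, "Comparator": "EqualTo",
         "LocaleValues": [{"Country": country}], "ActionsGuarded": guarded},
    ]
```

None of these system qualifications carry a premium fee, which is the point of the comparison with Masters.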


Wednesday, March 4, 2015

FAQ for Mturk Data International Amazon Mechanical Turk Survey Publishing

A few frequently asked questions about publishing academic studies on Amazon Mechanical Turk with Mturk Data.

1. How long does it take for results?

Usually you will see your studies completed in less than 24 hours. Sometimes larger studies of over 500 participants with specific qualifiers will take a little bit longer, but usually 24 hours is very realistic.

2. Do I need an account with Qualtrics, Survey Monkey or some other publisher?

No, but using those platforms ensures that you receive your data directly, and it is also less expensive because it takes less of our time.

3. Do I need a PayPal account to pay?

No. Our invoices are sent via PayPal, but you can pay them using Mastercard, Visa, American Express, Discover, or PayPal if you would like.

4. Will you send me documentation for the university?

Yes, we will send you an itemized invoice showing what you are paying for, along with screenshots of the HITs to be published. When the study is complete, we will also send you the .csv file we receive from Amazon showing participants' anonymous worker IDs to validate completion.

5. What do you need in our survey before publishing?

We need a single input area for the worker to input their worker ID so we can cross verify their submission on Amazon Mechanical Turk to participation in your study.
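A minimal sketch of that cross-verification, assuming you have the worker IDs from Amazon's results file and the worker IDs participants typed into your survey. Normalizing case and whitespace is our assumption, since typed IDs often contain stray spaces or lowercase letters.

```python
def normalize(worker_id: str) -> str:
    """Strip stray whitespace and upper-case a typed worker ID."""
    return worker_id.strip().upper()


def cross_verify(mturk_ids, survey_ids):
    """Compare worker IDs submitted on Mturk against IDs entered in the survey."""
    mturk = {normalize(w) for w in mturk_ids}
    survey = {normalize(w) for w in survey_ids}
    return {
        "verified": sorted(mturk & survey),
        "submitted_without_survey": sorted(mturk - survey),
        "survey_without_submission": sorted(survey - mturk),
    }
```

The "submitted_without_survey" group is the one to review before approving.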

6. Do you only publish surveys for universities that are outside of the United States?

We will publish for any university or individual no matter where they are located.

7. How do I pay participants?

Once the studies are completed, we verify that every submission on Mturk corresponds to a completed study on your end, and then we (Mturk Data) release payment to participants.

8. The screenshot you sent me has a link to "Guidelines for Academic Requesters". What is this and why is it in the HIT?

Many academics have to submit their studies to the university IRB for ethical approval prior to releasing their studies. The Dynamo guidelines were written by Turkers to help provide academics with additional information on how participants should be treated. We try our best to follow these guidelines because it is the right thing to do. Please read this document and sign it if you get a chance.

9. Do you work with any commercial clients?

Yes, but please keep in mind that the pricing on our website http://mturkdata.com only applies to academic customers. Commercial customers usually require more complex designs and are more time consuming. Send us an email at design@mturkdata.com with your request.

Tuesday, February 10, 2015

Do NOT Waste Money on Amazon Promoted HITs

EDIT: Within 48 hours, Amazon pulled down this advertisement.

Yesterday workers were greeted by an annoying new advertisement at the top of all Mturk pages.

The "inquire here" link leads to this PAGE where requesters can promote specific HITs or receive help from the Mechanical Turk team for a price.

Not even 24 hours after this intrusive advertisement appeared on Amazon Mechanical Turk, workers had found many ways to remove it from their browsers and will never see the ad you are paying good money for. The easiest way to block it is with a very common browser plugin called Adblock Plus, which removes this intrusive advertisement from workers' pages.

Workers are very resourceful and come from a wide variety of backgrounds, and less than 12 hours after Amazon unleashed this ad, workers had updated their scripts like Turking Tools to remove this banner from their screens.

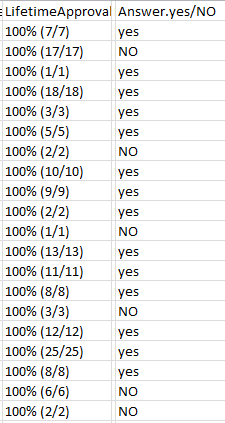

We ran 20 HITs to see if it will be an effective way to increase visibility of specific HITs. We asked workers "Have you blocked the new Amazon spam ad yet? yes/NO"

This is the result from 20 workers.

A little over 24 hours after this ad appeared, 15 out of 20 workers have blocked it and will never see it.

Why would workers block something that would help them find work?

The answer is simple, if it is really "work" and pays a fair wage, there is no need to promote it. The work gets completed quickly and promotion is unnecessary.

Friday, January 30, 2015

The "X" Rejection and Feedback on HITs

Amazon is pretty clear on how to reject workers using both the GUI and the API. When rejecting work in the GUI, Amazon forces requesters to put a reason for the rejection. When rejecting offline using downloaded .csv files, requesters do not have to input a reason for the rejection. Amazon docs state to use an "X" to approve workers in the column for approval and then upload the .csv file to approve all. They even provide a photo to guide requesters on how to do this.

We all try our best to avoid rejecting workers unless it is absolutely necessary. It can lower a worker's approval percentage, which can have an impact on their ability to perform future work. If you have to reject a worker, a detailed explanation for the rejection will help the worker improve in the future and also help protect your reputation on requester review sites.

If you want to communicate with a worker, putting a comment in the approval column will probably never be seen. This is a screenshot of the worker dashboard.

In the reject column, we can even see that a reason is given for the rejection. Some requesters just use an "X" to reject in the same way they approve. This is really a bad idea. These are some posts from today on the requester review site Turkopticon.


With over 24K submitted HITs, it is highly unlikely that the worker would ever see a message left in the approval column. But the worker will definitely take a look at the one rejection.

Here is the message left with the one rejection on Jan. 19th.

The feedback column is shown on both rejected and approved HITs, but the worker is unlikely to ever see feedback on approvals. The feedback on this rejection was unclear and did nothing to help improve work quality. I am not advocating rejecting workers as a way to communicate with them; you can message workers through the API and through the bonus feature in the GUI.
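As a sketch of messaging workers through the API instead of through a rejection, the function below calls `notify_workers` on a boto3 MTurk client (assumed to be created with `boto3.client("mturk")` and valid credentials). `NotifyWorkers` accepts a limited number of worker IDs per request (100 per the API reference at the time of writing; worth re-checking), so the IDs are sent in batches. The client is passed in, so any object with a compatible `notify_workers` method, such as a test stub, also works.

```python
# NotifyWorkers limit on worker IDs per request (check the current API reference).
MAX_WORKERS_PER_CALL = 100


def message_workers(client, worker_ids, subject, message):
    """Send the same message to many workers; no assignment is rejected.

    `client` is assumed to be a boto3 MTurk client, e.g. boto3.client("mturk").
    Returns the per-worker failure statuses reported by the API.
    """
    ids = list(dict.fromkeys(worker_ids))  # drop duplicate IDs, keep order
    failures = []
    for start in range(0, len(ids), MAX_WORKERS_PER_CALL):
        batch = ids[start:start + MAX_WORKERS_PER_CALL]
        response = client.notify_workers(
            Subject=subject,
            MessageText=message,
            WorkerIds=batch,
        )
        failures.extend(response.get("NotifyWorkersFailureStatuses", []))
    return failures
```

An empty return list means every message was accepted; anything else lists the worker IDs that could not be reached.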

If you do have to reject, give a detailed reason for the rejection because neither worker nor requester will benefit from an "X".
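For requesters rejecting through the API, the same rule can be enforced before the call goes out. This minimal sketch wraps boto3's `reject_assignment`, whose `RequesterFeedback` text is shown to the worker with the rejection. The 1,024-character cap reflects the limit documented in the API reference and is worth double-checking; the validation itself is only an illustration.

```python
# Documented maximum length of RequesterFeedback (verify against the API reference).
MAX_FEEDBACK_LENGTH = 1024


def reject_with_reason(client, assignment_id, reason):
    """Reject one assignment through the API, refusing placeholder reasons.

    `client` is assumed to be a boto3 MTurk client; RequesterFeedback is the
    text shown to the worker alongside the rejection.
    """
    reason = reason.strip()
    if not reason or reason.upper() == "X":
        raise ValueError("Tell the worker specifically why the work was rejected")
    if len(reason) > MAX_FEEDBACK_LENGTH:
        raise ValueError(f"Feedback is longer than {MAX_FEEDBACK_LENGTH} characters")
    return client.reject_assignment(
        AssignmentId=assignment_id,
        RequesterFeedback=reason,
    )
```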

Wednesday, January 14, 2015

Some Statistical Evidence On The Poor Quality Of Masters

Spamgirl from Turkernation ran a small study on the effectiveness of Masters workers. I thought it was pretty interesting and asked her if I could republish it here.

Quick study: Masters is garbage

I ran a small, quick study last night comparing the quality of three groups based on a fairly complex task. The task worked like this: a fairly exhaustive list of categories was presented, followed by instructions which said you had to come up with three new categories to add to the list. They could not be duplicates, and they shouldn't be too broad nor too narrow. The pay was $4-5 per hour, but bonuses would be given for good, thoughtful answers, doubling that pay. Here are the results:

Group one: 98% / 10,000 Approved qualifications

They completed all 10 HITs in 1 hour and 27 minutes

One worker gave three terrible answers (10%)

Out of all 30 answers, only one was a terrible suggestion (3%)

Out of all 30 answers, six were duplicates of those in the instructions (20%)

Group two: Masters qualification

They completed 6 out of 10 HITs in 13 hours and 30 minutes

Two workers gave three terrible answers (33%)

Out of all 18 answers, three were terrible suggestions (17%)

Out of all 18 answers, five were duplicates of those in the instructions (28%)

Group three: long-standing private qualification

They completed 5 out of 10 HITs in 13 hours and 30 minutes

NO workers gave three terrible answers (0%)

Out of all 15 answers, NONE were terrible suggestions (0%)

Out of all 15 answers, three were duplicates of those in the instructions (20%)

So, as you can see, both the private qualification and 98%/10k qualifications outperformed Masters. If you have an easy task and want it done quickly, and are willing to throw away low quality answers, then 98%/10k is a good qualification requirement for your work. If you'd like high quality, thoughtful answers, or have a complex task, a private qualification is likely your best bet.
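The percentages in the study are plain ratios of the reported counts, with each completed HIT holding three answers. The short Python check below recomputes them from those counts; it adds no data beyond what the study reports, and the group labels are just shorthand.

```python
# Counts reported in the study: (HITs completed, workers who gave three
# terrible answers, terrible suggestions, answers duplicating the instructions)
STUDY = {
    "98% / 10k": (10, 1, 1, 6),
    "Masters": (6, 2, 3, 5),
    "Private qualification": (5, 0, 0, 3),
}
ANSWERS_PER_HIT = 3


def pct(part, whole):
    """Percentage rounded to the nearest whole number, as in the write-up."""
    return round(100 * part / whole)


def summarize(study):
    summary = {}
    for name, (hits, bad_workers, bad_answers, duplicates) in study.items():
        answers = hits * ANSWERS_PER_HIT
        summary[name] = (pct(bad_workers, hits), pct(bad_answers, answers), pct(duplicates, answers))
    return summary


for name, (bad_workers, bad_answers, dupes) in summarize(STUDY).items():
    print(f"{name}: {bad_workers}% of workers, {bad_answers}% terrible, {dupes}% duplicates")
```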

I didn't include this in my data as it is subjective, but the overall quality of the answers provided by the private qualification group was far superior to that of the 98%/10k group. While the open qualification HITs' answers were original, they were often too broad or too narrow, but you could tell the workers tried, so I didn't rate those answers as "terrible". If you are looking for high quality, nothing beats a private qualification. You can also use someone else's qualification on your HITs if you use the API, so you don't even have to build your own private group. I'd recommend the Elite Proletariat qualification if you want some great workers.
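Here is a sketch of the qualification setups from this study, expressed as the `QualificationRequirements` list that boto3's `create_hit` accepts. The two IDs below are Amazon's system qualifications for approval rate and number of approved HITs. `PRIVATE_QUAL_ID` is a hypothetical placeholder for your own qualification, or one another requester has shared with you; it is not a real ID.

```python
# Amazon's system qualification type IDs.
PERCENT_APPROVED = "000000000000000000L0"      # Worker_PercentAssignmentsApproved
NUMBER_HITS_APPROVED = "00000000000000000040"  # Worker_NumberHITsApproved


def build_requirements(private_qual_id=None, min_percent=98, min_approved=10000):
    """Return a QualificationRequirements list for create_hit.

    With no private_qual_id this is the 98% / 10,000 setup from the study;
    passing an ID also requires that (hypothetical) private qualification.
    """
    requirements = [
        {
            "QualificationTypeId": PERCENT_APPROVED,
            "Comparator": "GreaterThanOrEqualTo",
            "IntegerValues": [min_percent],
            "ActionsGuarded": "Accept",
        },
        {
            "QualificationTypeId": NUMBER_HITS_APPROVED,
            "Comparator": "GreaterThanOrEqualTo",
            "IntegerValues": [min_approved],
            "ActionsGuarded": "Accept",
        },
    ]
    if private_qual_id is not None:
        # "Exists" admits any worker holding the qualification; hiding the HIT
        # from everyone else keeps the group private.
        requirements.append(
            {
                "QualificationTypeId": private_qual_id,
                "Comparator": "Exists",
                "ActionsGuarded": "DiscoverPreviewAndAccept",
            }
        )
    return requirements


PRIVATE_QUAL_ID = "YOUR_PRIVATE_QUALIFICATION_ID"  # hypothetical placeholder
```

The result would be passed as `QualificationRequirements=build_requirements(PRIVATE_QUAL_ID)` alongside the other `create_hit` arguments.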

Wednesday, December 3, 2014

Amazon's Mechanical Turk workers protest: 'I am a human being, not an algorithm'

A really good article about Dynamo was published today in The Guardian.

Please contact design@mturkdata.com for your HIT publishing needs.

Wednesday, November 5, 2014

Amazon Accepted a Few International Masters

Amazon finally accepted a few international workers for its prized Masters qualification yesterday. This is both good and bad. It is great that a few workers were given this qualification after completing hundreds of thousands of HITs; it is bad that, along with those international workers, Amazon also accepted a bunch of worthless workers, watering down this mystery qualification.

Here is some information on who just received the prized Masters qualification along with some excellent international workers:

1) Turkers with 3,000 HITs completed got it. You can reach that on day 11, and only that late because new accounts are restricted to 100 HITs per day for their first 10 days.

2) Turkers with approval ratings in the high 80 percent range got it.

3) Today, people who don't even Turk anymore got it. Multiple people.

Requesters, save your money: do not pay the 30% fee for this useless qualification. Set up your own custom qualifications instead, at the standard 10% fee.
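To put the two fee levels in numbers, here is a small Python calculation using the rates quoted in this 2014 post (10% standard, 30% with Masters). Amazon has changed its fee schedule since then, so treat the rates as inputs rather than constants. `Decimal` keeps the currency arithmetic exact, and the $500 reward total is only an example.

```python
from decimal import Decimal, ROUND_HALF_UP

STANDARD_FEE = Decimal("0.10")  # standard commission as quoted in this post
MASTERS_FEE = Decimal("0.30")   # commission with Masters, as quoted in this post
CENT = Decimal("0.01")


def total_cost(rewards, fee_rate):
    """Worker rewards plus Amazon's commission, rounded to the cent."""
    rewards = Decimal(rewards)
    fee = (rewards * fee_rate).quantize(CENT, rounding=ROUND_HALF_UP)
    return rewards + fee


budget = "500.00"  # example reward total
standard = total_cost(budget, STANDARD_FEE)
masters = total_cost(budget, MASTERS_FEE)
print(standard, masters, masters - standard)  # 550.00 650.00 100.00
```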

Wednesday, September 17, 2014

Turkers are People, not Labrats or Turkeys

It is disappointing to see that this is the way workers are viewed by some requesters.

With her research focused on sarcasm, I would hope that this was meant to be a joke of some kind, but it really comes off as derogatory and insulting. If her research is to be taken seriously and her studies are to be considered valid, I would think that she would want human beings to perform her tasks as opposed to "turkeys".

http://rachelrakov.ws.gc.cuny.edu/ https://twitter.com/ssdd3

In the past couple of years we have published information to help both new and old requesters understand the workings of Mturk and Amazon. We have tried to give them an idea of what it is like to be a worker and how to ensure they receive quality results from the broken system that is Amazon Mechanical Turk. Tweets like this just make it hard to move forward.
